What makes it different

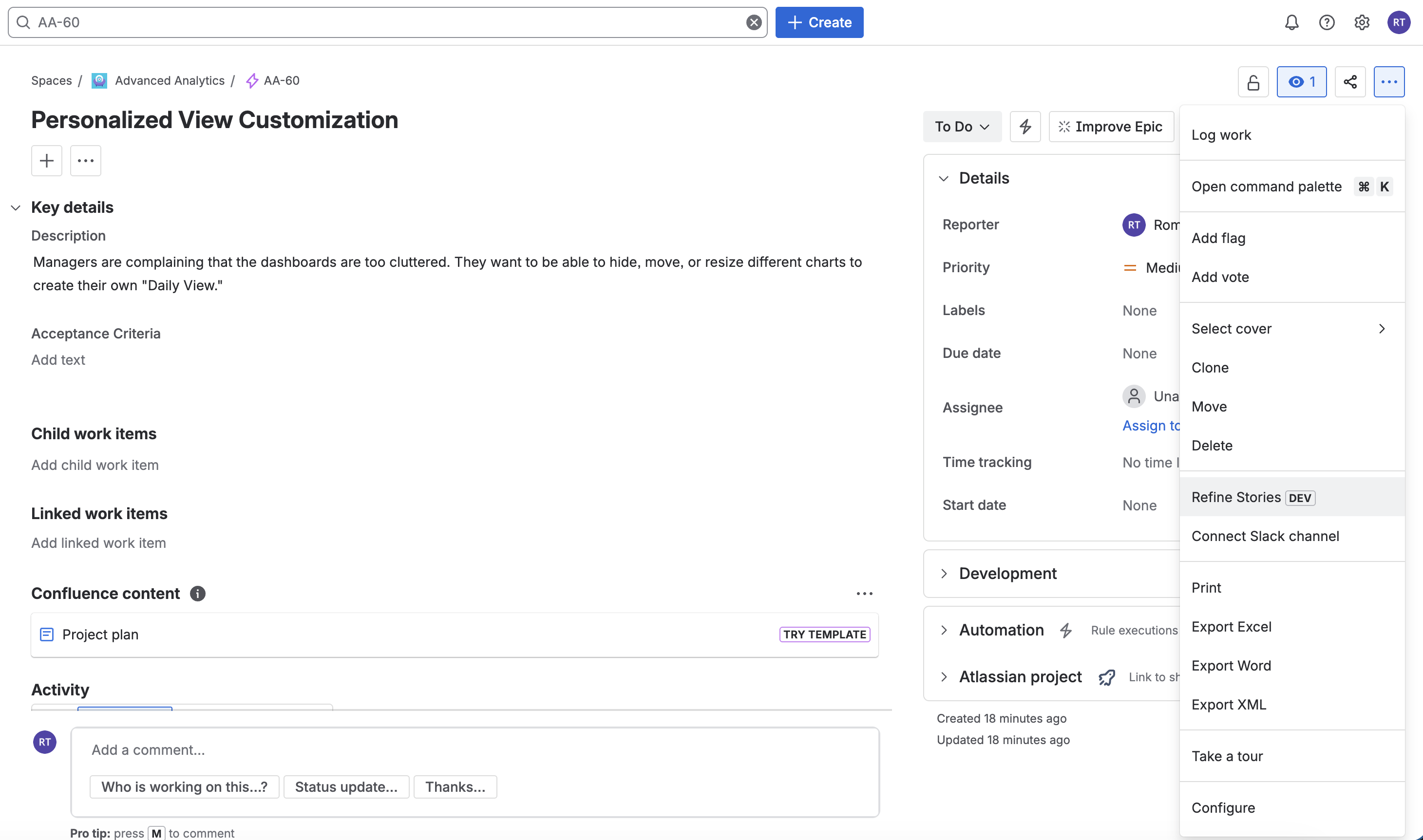

Not just AI. AI that knows your project.

Every feature is grounded in your existing backlog, your work instructions, and your team's writing style — not generic prompts.

Asks Before It Assumes

Instead of guessing, Refinely opens a discovery session to surface requirements, roles, and edge cases: the exact gaps that cause rework when the dev team is the first to find them.

Scales to Your Ticket's Size

A two-line spike gets a quick clarification and a handful of stories. A large Epic triggers a multi-stage discovery session. Refinely reads the complexity and adjusts automatically.

Knows Your Backlog Already

Refinely reads your existing Jira stories before it writes a single word, so the structure, terminology, and tone stay aligned with how your team already writes.

Uses Your Work Instructions

Upload your SOPs, process guides, or standards documents as PDF, Excel, CSV, markdown, or plain text. When a ticket relates to that process, Refinely pulls the right sections before generating.

Test-Ready Acceptance Criteria

Every story comes with structured GIVEN / WHEN / THEN criteria — validated against common gaps before you ever see the output. Developers get what they actually need to build.
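For a sense of what "structured" could mean in practice, here is a minimal Python sketch. It assumes a criterion is structured when all three clauses are filled in. The `Criterion` class and `missing_clauses` helper are hypothetical names for illustration; Refinely's actual validation rules are not described here.

```python
from dataclasses import dataclass


@dataclass
class Criterion:
    """One acceptance criterion in GIVEN / WHEN / THEN form (hypothetical shape)."""
    given: str
    when: str
    then: str

    def render(self) -> str:
        # Format the criterion the way it would appear on a story.
        return f"GIVEN {self.given}\nWHEN {self.when}\nTHEN {self.then}"


def missing_clauses(criterion: Criterion) -> list[str]:
    """Return the names of any clauses that are empty or whitespace only."""
    return [
        name
        for name in ("given", "when", "then")
        if not getattr(criterion, name).strip()
    ]


example = Criterion(
    given="a saved card has expired",
    when="the customer submits payment",
    then="the payment is declined with an expiry message",
)
print(example.render())
print(missing_clauses(example))  # an empty list means no clause is missing
```

A criterion with a blank WHEN clause would be reported as `["when"]`, which is the kind of gap worth catching before a developer picks up the story.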

A Canvas to Refine Your Way

Work on a full-screen canvas. Edit any story individually, or ask the AI to update ten at once: "simplify the language" or "add a timeout scenario to every payment story."

See Every Change Before Pushing

Before anything goes to Jira, Refinely shows a word-by-word diff between your original and the AI's output. Nothing sneaks past you — every addition and removal is highlighted.
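To illustrate the idea of a word-by-word diff, here is a minimal sketch using Python's standard `difflib` module. It is not Refinely's implementation. The `word_diff` and `render` helpers and the bracket markers are assumptions chosen for this example.

```python
import difflib


def word_diff(original: str, revised: str) -> list[tuple[str, str]]:
    """Return (tag, word) pairs where tag is 'same', 'removed', or 'added'."""
    a, b = original.split(), revised.split()
    matcher = difflib.SequenceMatcher(a=a, b=b, autojunk=False)
    result = []
    for op, i1, i2, j1, j2 in matcher.get_opcodes():
        if op == "equal":
            result.extend(("same", word) for word in a[i1:i2])
        else:
            # 'replace' becomes a removal followed by an addition;
            # 'delete' and 'insert' each leave one of the two slices empty.
            result.extend(("removed", word) for word in a[i1:i2])
            result.extend(("added", word) for word in b[j1:j2])
    return result


def render(diff: list[tuple[str, str]]) -> str:
    """Mark removals as [-word-] and additions as {+word+}."""
    parts = []
    for tag, word in diff:
        if tag == "removed":
            parts.append(f"[-{word}-]")
        elif tag == "added":
            parts.append("{+" + word + "+}")
        else:
            parts.append(word)
    return " ".join(parts)


print(render(word_diff("User can log in", "User can sign in securely")))
# prints: User can [-log-] {+sign+} in {+securely+}
```

Splitting on whitespace keeps the comparison at word level, so a one-word change shows up as one removal and one addition rather than a rewritten sentence.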

Your Domain, Your Language

Configure your business domain, actor roles, and process taxonomy once. Every output respects your organisation's vocabulary — not generic tech jargon.

Push Directly to Jira

One click creates Stories, Epics, or Features in Jira, with full rich text, issue links back to the source ticket, and your team's custom field values pre-filled.

No Hidden Compute Costs

Use your own Google Gemini, OpenAI, or Anthropic key. You pay your provider directly — only for what you use. Refinely stores your keys in Atlassian's encrypted secret storage.

Pick Up Where You Left Off

All sessions are saved automatically. Come back an hour later or a day later — your canvas is exactly where you left it, ready to continue.